Over the past year, we at DashBouquet have been working in the field of machine learning, and by the end of 2018 we had some results that we want to share. We trained a neural network to recognize a car from a photo and created this simple demo for illustration.

# The data

The starting point for the task was the Stanford Cars Dataset. Some classes of this dataset contain quite a lot of errors (for example, Audi or Aston Martin models are often hard to tell apart even for a human). So we took only 48 classes from the dataset and cleaned them up. During training, the data was augmented with rotations, reflections and random color changes.
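To make the augmentation step more concrete, here is a minimal NumPy sketch of two of the three transformations mentioned above: a random horizontal reflection and a random color shift. It is not the code we trained with. The function name `augment`, the `max_color_shift` parameter and its default value are assumptions made for illustration, and rotations are left out because they need an interpolation routine.

```python
import numpy as np

def augment(image, rng, max_color_shift=0.2):
    """Return a randomly reflected and color-shifted copy of an RGB image.

    `image` is a float array of shape (height, width, 3) with values in [0, 1].
    `max_color_shift` is a hypothetical knob, not a value from the project.
    """
    out = image.copy()
    # Mirror the image left to right with probability 0.5.
    if rng.random() < 0.5:
        out = out[:, ::-1, :]
    # Scale each color channel independently by a factor close to 1.
    factors = rng.uniform(1 - max_color_shift, 1 + max_color_shift, size=3)
    out = out * factors
    # Keep pixel values in the valid range.
    return np.clip(out, 0.0, 1.0)

rng = np.random.default_rng(0)
image = rng.random((224, 224, 3))  # stand-in for a real photo
augmented = augment(image, rng)
```

Calling `augment` again on the same image gives a different variant each time, which is the point of applying it during training rather than once up front.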

# Base models

Naturally, we applied transfer learning to the task. As feature extractors we used models that are among the best performing on the ImageNet dataset (ResNet50, Inception V3 and Xception). First, we simply concatenated their outputs and stacked some dense layers on top. This approach didn’t work particularly well: about 55% accuracy on the test set.
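The idea of this first attempt can be sketched in plain NumPy. The feature vectors below are random stand-ins for the real extractor outputs, the weights are untrained, and the hidden layer size of 256 is an assumption. The only numbers taken from the real setup are the 48 classes and the 2048-dimensional pooled output that each of the three Keras models produces.

```python
import numpy as np

FEATURE_DIM = 2048  # pooled output size of each of the three extractors
NUM_CLASSES = 48    # number of car classes we kept
HIDDEN_UNITS = 256  # assumed size, chosen only for this sketch

def softmax(logits):
    # Subtract the row maximum for numerical stability.
    shifted = logits - logits.max(axis=-1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=-1, keepdims=True)

rng = np.random.default_rng(0)
batch = 4

# Random stand-ins for the features each pretrained model would yield.
resnet_features = rng.normal(size=(batch, FEATURE_DIM))
inception_features = rng.normal(size=(batch, FEATURE_DIM))
xception_features = rng.normal(size=(batch, FEATURE_DIM))

# Concatenate along the feature axis: one long vector per image.
combined = np.concatenate(
    [resnet_features, inception_features, xception_features], axis=1
)

# One hidden dense layer with ReLU, then a softmax output layer.
w1 = rng.normal(scale=0.01, size=(combined.shape[1], HIDDEN_UNITS))
b1 = np.zeros(HIDDEN_UNITS)
w2 = rng.normal(scale=0.01, size=(HIDDEN_UNITS, NUM_CLASSES))
b2 = np.zeros(NUM_CLASSES)

hidden = np.maximum(combined @ w1 + b1, 0.0)
probabilities = softmax(hidden @ w2 + b2)
```

Each row of `probabilities` is a distribution over the 48 classes for one image.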

# Approach

I tried to analyze how I recognize a car that I see in the street. The easiest thing to tell about a car is its body type. There are only a few of them, and they usually have distinct features. As a rule, the manufacturer is not a problem either. Recognizing the particular model almost always takes another moment or two, and sometimes a minute if the model is rare. In this case, I look at features like the shape of the radiator grille or of the lamps. These features are decisive, I think. The shape of the doors and windows is also important. And the information about the body type and the manufacturer is certainly helpful too.

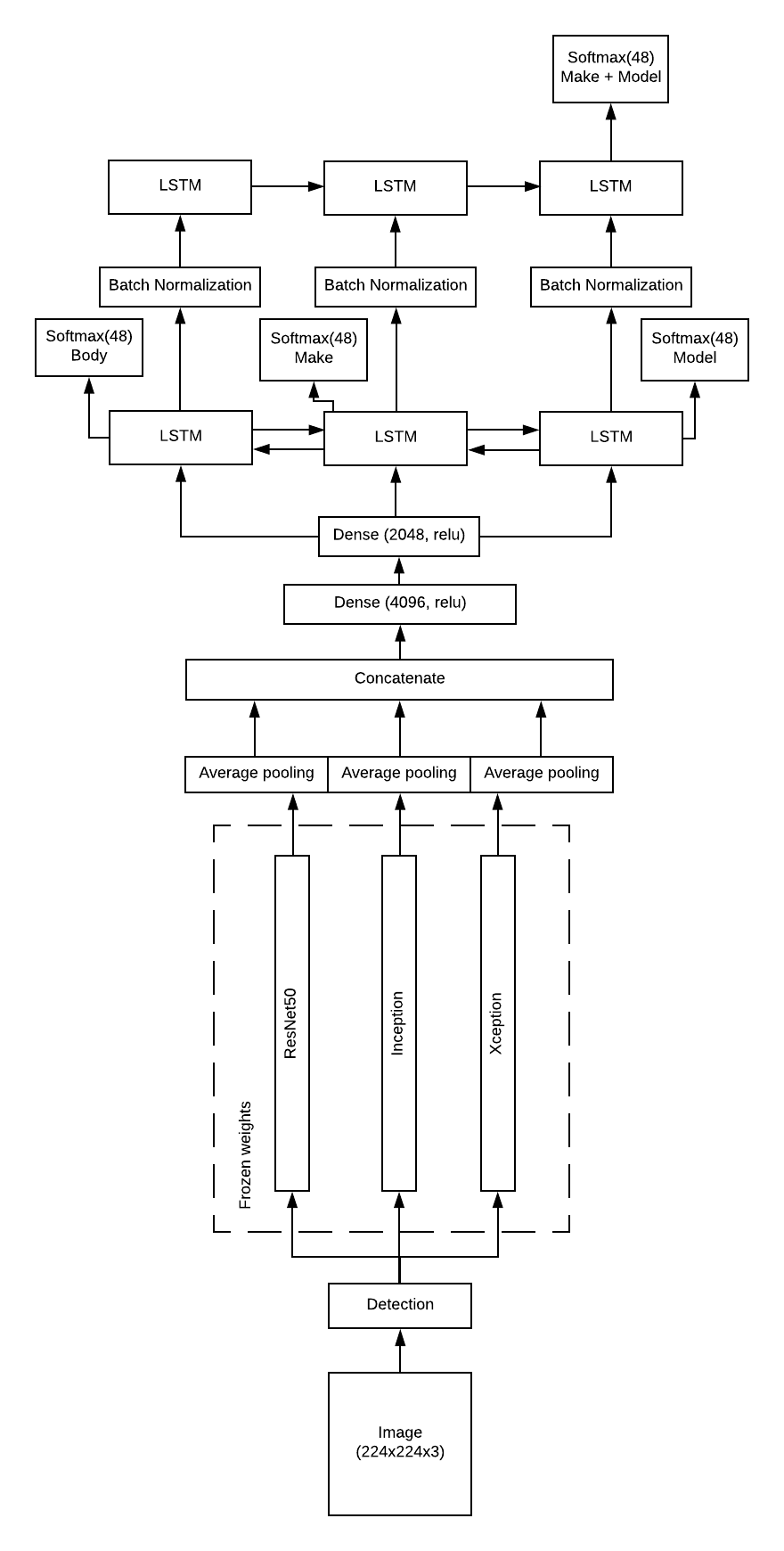

It looked like a sequence to me, so I decided to try an LSTM. It should take the concatenated feature vector yielded by the pretrained models and predict the body type on the first step, the manufacturer on the second and the model on the third. And it should be bidirectional, because the sequence probably works in the opposite direction as well. On top of that, another LSTM should gather these predictions together and yield the final prediction of make and model. This approach raised the accuracy significantly: we got about 72% on the test set.
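Here is a rough Keras sketch of that idea, not the exact network we trained. The layer sizes and the numbers of body types and makes are made up, and the weights are untrained. Repeating the feature vector with `RepeatVector` is one possible way to turn it into a three-step sequence. As a simplification, the second LSTM here reads the hidden states of the first one rather than its predictions.

```python
import numpy as np
from tensorflow import keras
from tensorflow.keras import layers

FEATURE_DIM = 6144   # assumed: three concatenated 2048-d feature vectors
NUM_BODY_TYPES = 8   # hypothetical
NUM_MAKES = 20       # hypothetical
NUM_CLASSES = 48     # make-and-model classes, as in our dataset
LSTM_UNITS = 128     # hypothetical

features = keras.Input(shape=(FEATURE_DIM,), name="features")

# Show the same feature vector to the LSTM at each of the three steps.
steps = layers.RepeatVector(3)(features)
sequence = layers.Bidirectional(
    layers.LSTM(LSTM_UNITS, return_sequences=True)
)(steps)

# One auxiliary prediction per step: body type, make, then model.
body_type = layers.Dense(
    NUM_BODY_TYPES, activation="softmax", name="body_type"
)(sequence[:, 0, :])
make = layers.Dense(
    NUM_MAKES, activation="softmax", name="make"
)(sequence[:, 1, :])
model_step = layers.Dense(
    NUM_CLASSES, activation="softmax", name="model_step"
)(sequence[:, 2, :])

# A second LSTM gathers the three steps into the final prediction.
summary = layers.LSTM(LSTM_UNITS)(sequence)
final = layers.Dense(NUM_CLASSES, activation="softmax", name="final")(summary)

model = keras.Model(features, [body_type, make, model_step, final])

# Run a dummy batch through the untrained network to check the shapes.
dummy = np.zeros((2, FEATURE_DIM), dtype="float32")
predictions = model.predict(dummy, verbose=0)
```

The auxiliary outputs let each step be trained against its own labels, while only the last output is needed at prediction time.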

I have to admit that an LSTM is a bit of overkill here. The sequence is short, so an ordinary RNN should do just as well. But an LSTM is just as easy to implement in Keras and adds barely any lag to the overall evaluation time, so replacing it wasn’t a high priority.

# Tweaks and tricks

Next, we tried to get rid of the redundant features the network might overfit to, like the characteristics of the background. Dropout layers didn’t help a lot, so we used a ready-made solution provided by the amazing ImageAI library. In the preprocessing step, we applied the YOLO model to detect the car in the image and cropped the image accordingly. This gave us another 10% of test accuracy.
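The cropping itself is simple once a detector has returned bounding boxes. The sketch below does not call ImageAI. It assumes the detections have already been converted into a list of dictionaries with a `name` and a `box` given as `(x1, y1, x2, y2)` pixel coordinates, which is a format chosen for this example. The function `crop_to_car` and its `margin` parameter are hypothetical as well.

```python
import numpy as np

def crop_to_car(image, detections, margin=0.05):
    """Crop `image` to the largest detection labeled "car".

    `detections` is a list of dicts like {"name": "car", "box": (x1, y1, x2, y2)}.
    If no car is detected, the image is returned unchanged.
    """
    cars = [d for d in detections if d["name"] == "car"]
    if not cars:
        return image

    def area(detection):
        x1, y1, x2, y2 = detection["box"]
        return (x2 - x1) * (y2 - y1)

    # Assume the biggest car in the frame is the one being photographed.
    x1, y1, x2, y2 = max(cars, key=area)["box"]

    # Keep a small margin around the box, clamped to the image borders.
    height, width = image.shape[:2]
    pad_x = round((x2 - x1) * margin)
    pad_y = round((y2 - y1) * margin)
    left, top = max(x1 - pad_x, 0), max(y1 - pad_y, 0)
    right, bottom = min(x2 + pad_x, width), min(y2 + pad_y, height)
    return image[top:bottom, left:right]

image = np.zeros((100, 200, 3), dtype=np.uint8)  # stand-in for a photo
detections = [
    {"name": "person", "box": (0, 0, 50, 90)},
    {"name": "car", "box": (40, 20, 160, 80)},
]
cropped = crop_to_car(image, detections)
```

Falling back to the full image when nothing is detected keeps the pipeline from failing on photos where the detector misses the car.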

The last step was removing all dropout layers, which gave us 5 more percent. It surprised me at first, but now I think that since we got rid of almost all of the background in the detection step, the network could infer almost exclusively relevant features from the input. So the dropout layers probably became more harmful than helpful after that.

# Architecture

I think there is no reason to describe the architecture we used any further, so I’ll just provide a diagram

# Analysis

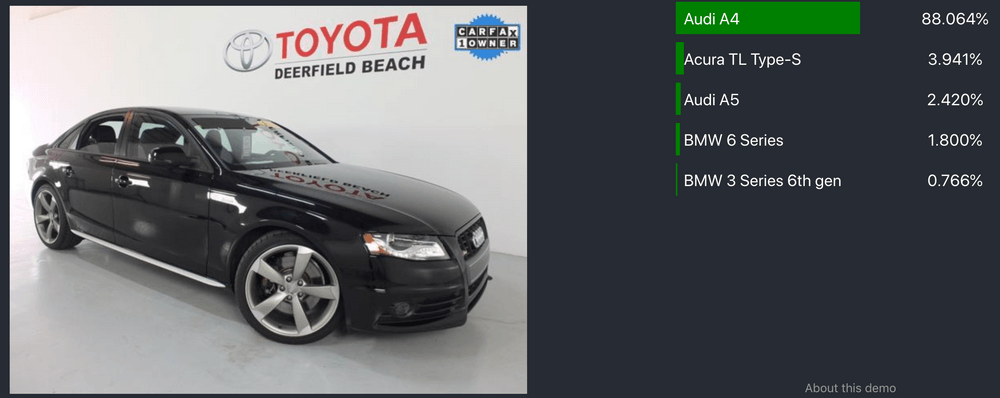

The final result, for now, is 87.5% top-1 accuracy and 98.5% top-5 accuracy. Top-1 accuracy means that the prediction with the highest score is correct, and top-5 accuracy means that the correct answer is among the five predictions with the highest scores.
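For reference, both metrics can be computed in a few lines of NumPy. This is a generic sketch with a tiny made-up score matrix, not our evaluation code, and `top_k_accuracy` is a name chosen for this example.

```python
import numpy as np

def top_k_accuracy(scores, labels, k):
    """Fraction of rows whose true label is among the k highest scores."""
    # Indices of the k largest scores in every row.
    top_k = np.argsort(scores, axis=1)[:, -k:]
    # A row is a hit if its true label appears among those indices.
    hits = (top_k == labels[:, None]).any(axis=1)
    return hits.mean()

# Four samples, three classes; the scores are invented for illustration.
scores = np.array([
    [0.10, 0.60, 0.30],
    [0.50, 0.20, 0.30],
    [0.20, 0.30, 0.50],
    [0.40, 0.35, 0.25],
])
labels = np.array([1, 2, 2, 1])

top1 = top_k_accuracy(scores, labels, k=1)
top2 = top_k_accuracy(scores, labels, k=2)
```

In this toy example the highest score is right for two of the four samples, while the true label is always among the top two.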

Most of the errors the network makes are ones a human could easily make too. For example, this Audi S4 is confused with an Audi A4 of the previous generation:

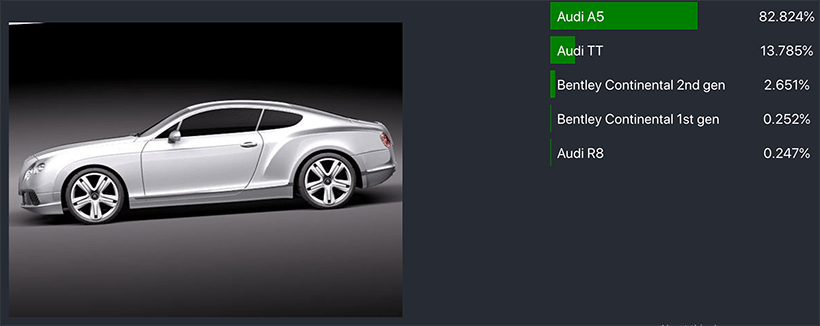

This Bentley Continental is classified as an Audi A5:

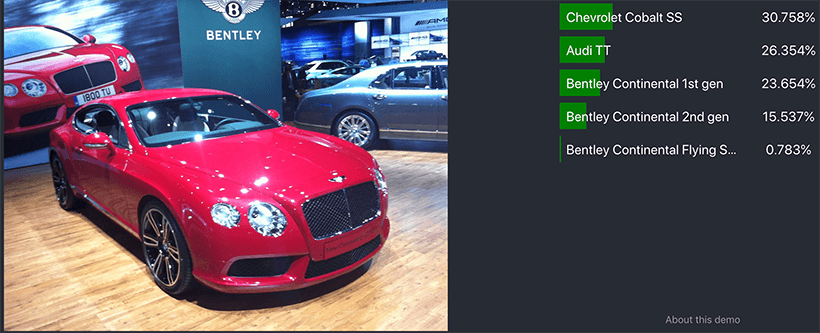

Some errors suggest that the network still relies on color too much. This Bentley is recognized as a Chevrolet Cobalt SS, many of which are red in the training set:

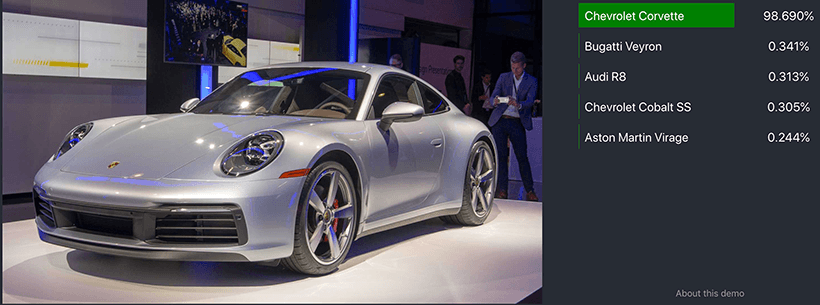

It is interesting how the network behaves when it sees a model that wasn’t in the training set. A Porsche 911 was classified as a Chevrolet Corvette, which is also a sports car of a similar kind.

And a Honda CR-V was classified as an Acura ZDX.

Some results are funny though:

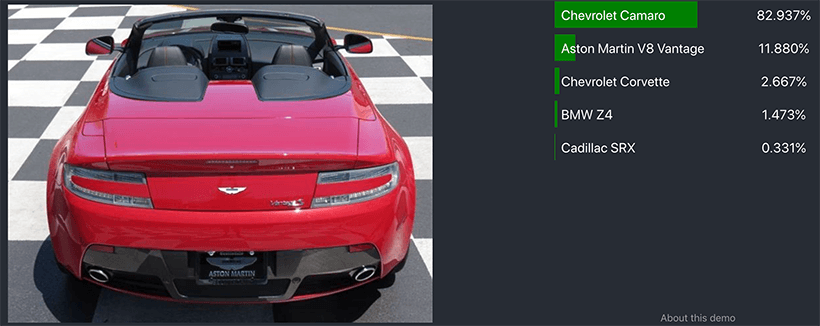

In general, it looks like the network gets the general idea of the car in a photo right. A sports car is rarely confused with an SUV, and a convertible is most likely to be confused with another convertible of the same kind.

I think that many of these problems may go away if we use a bigger dataset, and that is what we are working on right now.

Also, I can’t help thinking that the features we get from the last layers of the pretrained models may be too general. It may turn out that we get better results if we use earlier layers. This was difficult to check while development was happening on a local machine, because the earlier layers are much bigger than the last one and demand much more memory. Now that we have moved to Google Cloud Platform and FloydHub, I think we can give it a try.

# Stack

All development was done in Python notebooks with Keras, and the model was deployed using Flask and Docker.

If you are interested, you can find the code on GitHub.